Data has become one of the most valuable assets in today’s organizations. It helps them improve customer service, business operations, product development, and decision-making. Organizations now produce more data every day than ever before. Yet collecting data is not enough: it has to be turned into something that delivers commercial value. This is where data engineering comes in. Data engineering is the work of designing, building, and managing the systems and processes that gather, store, process, and convert data into a usable format. It covers data ingestion, data integration, data modeling, and data storage.

For any business to extract value from its data assets in the current data-driven environment, data engineering is essential. With a well-designed data engineering system, organizations can make better decisions based on insights from their data, improve data quality, and shorten processing times. IDC’s most recent findings indicate that the global datasphere will grow to 175 zettabytes in a few years, and that the number is projected to reach 491 zettabytes by 2027. This exponential growth highlights the vital role data engineers play in organizing, analyzing, and extracting value from such a massive amount of information, and it underscores their importance in the modern digital economy.

What is data engineering?

Data engineering is an essential discipline that helps businesses manage and use their data assets efficiently. It consists of planning, building, and maintaining the infrastructure needed to extract, transform, and load (ETL) massive amounts of data from several sources into a format that is suitable for analysis. The worldwide big data analytics market was estimated to be worth $307.51 billion in 2023 and is expected to grow from $348.21 billion in 2024 to $924.39 billion by 2032.

Data engineers are responsible for data ingestion, processing, transformation, and storage, among other things. They need to understand the organization’s data requirements and build reliable data pipelines that can handle data at scale. To do this well, data engineers work closely with data scientists and analysts and use modern tools such as ETL frameworks, databases, data warehouses, and data lakes. This allows them to manage and process data from various sources efficiently.

Because of their specialized knowledge and skills, data engineers play an important role in the success of any data-driven business. By building and running scalable, effective data pipelines, they enable businesses to extract meaningful insights from their data and make well-informed decisions.

Let’s dissect it even more:

- 1. Data: Data engineering solutions deal with many kinds of data, including inventories, sales figures, product details, supplier information, personnel scheduling and productivity (work hours, shifts, and schedules), and customer information.

- 2. Data organization: Data engineering organizes and structures data to make it practical and accessible.

- 3. Data processing: ETL (Extract, Transform, Load) is a procedure used in data engineering. It prepares data for analysis by extracting information from multiple sources, converting it into a usable format, and then loading it into the appropriate location.

- 4. Data systems: Systems and frameworks govern how data is processed and used in data engineering. Think of them as the detailed guidelines for managing data.

- 5. Data analysis: Once the data is well processed, analysts and data scientists can use it to derive insights and useful information.

- 6. Optimization: Data engineers continuously optimize their processes to get better results. They look for ways to make data processing faster, better, and more flexible in response to shifting requirements.

To put it briefly, data engineers work behind the scenes to make sure that all the ingredients (the data) are prepared, well organized, and ready for the analysts to create something awesome.

What Function Do Data Engineers Serve?

Data engineers manage raw data so that it becomes accessible and usable. They are skilled at transforming unstructured, raw data into a form that yields valuable insights, and at moving data between locations without altering it. Data engineers can be divided into three main categories:

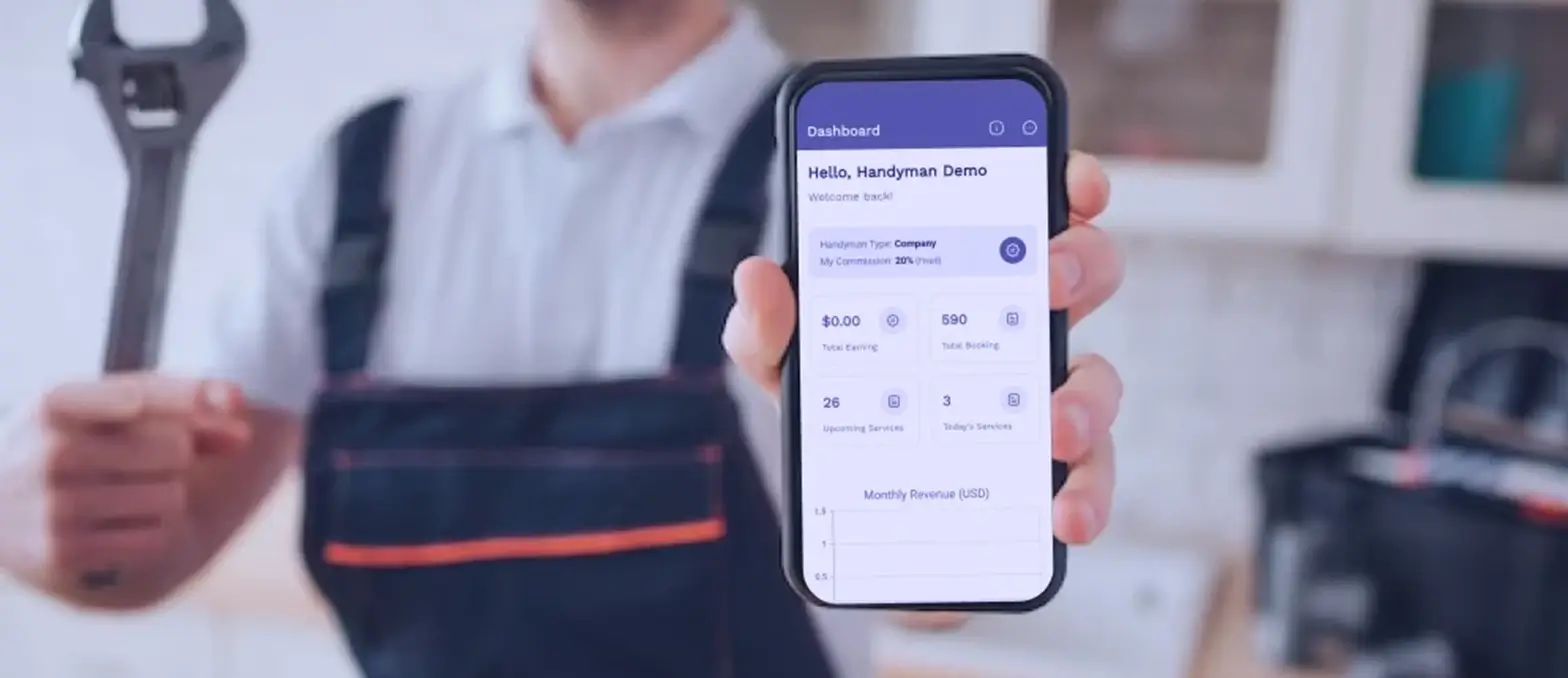

1. All-arounder

All-arounders, or generalists, typically work at small companies or on small teams. Here, data engineers are among the few “data-focused” people and perform a variety of duties. Generalists are often responsible for every step of the data process, from administration to analysis. Since smaller firms do not have to worry as much about building “for scale,” this is a good option for someone weighing data engineering vs. data science.

2. Pipeline-centric

Pipeline-centric data engineers, often found in data engineering consulting services, work alongside data scientists to help them make use of the data they collect. The role requires “in-depth knowledge of distributed systems and computer science.”

3. Database-centric

At larger companies, where managing the flow of data is a full-time job, data engineers concentrate on databases and data analytics tools. Database-centric data engineers create table schemas and work with data warehouses that span several databases.

What is the role of a data engineer?

To become a certified data engineer, one must be proficient with a specific set of data engineering tools and technologies, such as:

Apache Hadoop and Spark

The Apache Hadoop software library provides a framework for processing massive data volumes across clusters of computers using simple programming models. It is designed to scale out to hundreds of servers, each of which can store data. The platform can be used from several programming languages, including R, Java, Scala, and Python.

Although Hadoop is a crucial tool for big data, its processing speed is a major source of concern. Apache Spark is a data processing engine that handles similar workloads and also enables stream processing, a use case where data must be continuously ingested and output. Hadoop, by contrast, relies on batch processing, which collects data into batches so that it can be processed in bulk later. This can be a slow procedure.
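The difference between batch and stream processing can be illustrated with a minimal Python sketch. It uses neither Hadoop nor Spark; the function names `process_batch` and `process_stream` and the sample readings are hypothetical and exist only to show the two styles side by side.

```python
from typing import Iterable, Iterator, List


def process_batch(records: List[float]) -> float:
    """Batch style: wait until all records are collected, then compute once."""
    return sum(records) / len(records)


def process_stream(records: Iterable[float]) -> Iterator[float]:
    """Stream style: emit an updated running average after every record."""
    total = 0.0
    count = 0
    for value in records:
        total += value
        count += 1
        yield total / count


readings = [10.0, 20.0, 30.0, 40.0]  # hypothetical sensor readings

batch_result = process_batch(readings)           # one answer at the end
stream_results = list(process_stream(readings))  # one answer per record
```

The batch function produces a single result only after all the data is available, while the streaming generator has a usable result after each new record.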

C++: C++ is a general-purpose, compiled language valued for its performance. That speed makes it a good fit for managing large amounts of data and for low-latency work such as real-time analytics.

Database architectures (NoSQL and SQL)

SQL is the standard language for creating and maintaining relational database systems, which store data in tables made up of rows and columns. NoSQL databases are non-tabular: they do not organize data into relational tables. They come in various forms based on their data model, for example document or graph databases. Data engineers need to be proficient with database management systems (DBMS), the software that gives databases an interface for storing and retrieving information.
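As a rough illustration of the two models, the sketch below stores the same kind of information first in a relational table (using Python’s built-in `sqlite3` module) and then as JSON documents. The plain Python list of documents is only a stand-in for a document database, and the table, field names, and sample values are assumptions made for the example.

```python
import json
import sqlite3

# Relational (SQL): data lives in a table with fixed columns.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, city TEXT)")
conn.executemany(
    "INSERT INTO customers (id, name, city) VALUES (?, ?, ?)",
    [(1, "Ada", "London"), (2, "Grace", "New York")],
)
sql_rows = conn.execute(
    "SELECT name FROM customers WHERE city = ?", ("London",)
).fetchall()

# Document style (NoSQL-like): each record is a self-describing document,
# and documents in the same collection may have different fields.
documents = [
    json.loads('{"id": 1, "name": "Ada", "city": "London", "tags": ["vip"]}'),
    json.loads('{"id": 2, "name": "Grace", "orders": [{"sku": "A1", "qty": 2}]}'),
]
doc_names = [doc["name"] for doc in documents if doc.get("city") == "London"]
```

Both queries find the same customer, but the relational version relies on a schema defined up front, while the document version tolerates records with different shapes.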

Data warehouse

Data warehouses are vast storage repositories that hold a variety of historical and current data sets. The data comes from sources such as ERP systems, accounting software, and CRM systems. Ultimately, data engineering services use this data for data mining, reporting, analytics, and other business-related activities. Data engineers need to be conversant with Amazon Web Services (AWS) and familiar with a range of data storage solutions and cloud service platforms.

Tools for extracting, transforming, and loading data – ETL

ETL is the process of extracting data from a source, transforming it into a usable format, and loading it into a data warehouse so that it can be stored and analyzed. It typically uses “batch processing” to prepare data that answers a specific business question, which benefits the end user. ETL gathers information from various sources, applies a set of rules based on business needs, and then loads the transformed data into a database or business intelligence platform so that it is accessible to all members of the organization.
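The three ETL steps can be sketched in a few lines of Python using only the standard library. This is a simplified illustration, not a production pipeline: the CSV content, the cleaning rules, and the `orders` table are all hypothetical, and an in-memory SQLite database stands in for the data warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical raw export from a source system (note the messy values).
RAW_CSV = """order_id,customer,amount
1, alice ,19.99
2,BOB,5.00
3,carol,not_a_number
"""


def extract(text: str) -> list:
    """Extract: read rows from the source into dictionaries."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows: list) -> list:
    """Transform: apply business rules (clean names, drop invalid amounts)."""
    cleaned = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # rule: skip rows whose amount is not numeric
        name = row["customer"].strip().title()
        cleaned.append((int(row["order_id"]), name, amount))
    return cleaned


def load(rows: list, conn: sqlite3.Connection) -> None:
    """Load: write the transformed rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders (order_id INTEGER, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


warehouse = sqlite3.connect(":memory:")  # stand-in for a real warehouse
load(transform(extract(RAW_CSV)), warehouse)
loaded = warehouse.execute(
    "SELECT order_id, customer, amount FROM orders ORDER BY order_id"
).fetchall()
```

Keeping each step in its own function makes it easier to swap the source, change the rules, or point the load step at a different target.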

Machine learning

In machine learning, trained algorithms are referred to as models. These models allow data scientists to make predictions from historical or current data. Data engineers are expected to have a basic understanding of machine learning because it makes it easier to meet the needs of data scientists and the company. Building and deploying the data pipelines that supply these models with accurate data falls within the purview of data engineers.

Data APIs

An API is an interface that software programs use to access data. It enables communication between computers or applications to complete a given task. Take web apps as an example: APIs let front-end users interact with back-end data and functionality.

When a request arrives from the website, the API lets the application access the database, extract data from the necessary tables, process the request, and send back an HTTP response that is ultimately rendered in the web browser. Data engineers build these APIs on top of databases so that data scientists can query the data and run analytics.
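The request flow described above can be sketched without a web framework. In the example below, `handle_request` is a hypothetical handler that takes a request path, queries a database, and returns a status code with a JSON body; the `/sales/<region>` route and the `sales` table are assumptions made for illustration. A real service would sit behind an HTTP server.

```python
import json
import sqlite3
from typing import Tuple


def make_database() -> sqlite3.Connection:
    """Create a small in-memory database with hypothetical sales data."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
    conn.executemany(
        "INSERT INTO sales VALUES (?, ?)",
        [("north", 100.0), ("north", 50.0), ("south", 75.0)],
    )
    return conn


def handle_request(conn: sqlite3.Connection, path: str) -> Tuple[int, str]:
    """Map a request path such as '/sales/north' to a query and a JSON reply."""
    parts = path.strip("/").split("/")
    if len(parts) != 2 or parts[0] != "sales":
        return 404, json.dumps({"error": "not found"})
    region = parts[1]
    row = conn.execute(
        "SELECT COALESCE(SUM(amount), 0), COUNT(*) FROM sales WHERE region = ?",
        (region,),
    ).fetchone()
    body = {"region": region, "total": row[0], "orders": row[1]}
    return 200, json.dumps(body)


db = make_database()
status, body = handle_request(db, "/sales/north")
```

The caller never sees the SQL or the table layout. It only sees the path it requested and the JSON that comes back, which is what makes an API a stable interface to the data.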

Programming languages

Python and Java: Python is one of the most popular languages for data modeling and statistical analysis. Because Java is so widely used, many data architecture frameworks and their APIs are built for it.

A fundamental comprehension of distributed systems

Data engineers are expected to be well versed in Hadoop. The Apache Hadoop software library is a framework that enables the distributed processing of massive data sets across computer clusters using simple programming models. Its architecture allows it to scale from a single server to thousands of machines, each of which can do local processing and store data. Apache Spark is one of the most popular tools for data science programming, and it is written in Scala.
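Hadoop’s best-known programming model is MapReduce. The sketch below imitates its three phases (map, shuffle, reduce) in plain Python on a single machine to show the idea; it does not use the Hadoop API, and the function names and input text are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def map_phase(chunk: str) -> List[Tuple[str, int]]:
    """Map: each worker turns its chunk of text into (word, 1) pairs."""
    return [(word.lower(), 1) for word in chunk.split()]


def shuffle_phase(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Shuffle: group all values that share the same key."""
    grouped: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped: Dict[str, List[int]]) -> Dict[str, int]:
    """Reduce: combine the values for each key into one result."""
    return {key: sum(values) for key, values in grouped.items()}


# Hypothetical input, already split into chunks as if stored on different nodes.
chunks = ["big data big pipelines", "data pipelines at scale"]

mapped = [pair for chunk in chunks for pair in map_phase(chunk)]
word_counts = reduce_phase(shuffle_phase(mapped))
```

In a real cluster, the map calls run on the machines that hold each chunk and the reduce calls run in parallel per key, which is what lets the same simple program scale to very large data sets.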

Understanding of algorithms and data structures

Although data engineers typically concentrate on data filtering and optimization, a basic understanding of algorithms is necessary to comprehend how data functions in an organization as a whole. In addition, knowing these concepts makes it easier to define goals and milestones and to overcome business-related roadblocks.
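A small example shows why the choice of data structure matters for filtering tasks. Both functions below remove duplicate values while preserving order, but one checks membership in a list and the other in a set. The function names and sample identifiers are hypothetical.

```python
from typing import Hashable, Iterable, List


def dedupe_with_list(items: Iterable[Hashable]) -> List[Hashable]:
    """Membership test on a list scans it each time: roughly O(n^2) overall."""
    seen: List[Hashable] = []
    for item in items:
        if item not in seen:
            seen.append(item)
    return seen


def dedupe_with_set(items: Iterable[Hashable]) -> List[Hashable]:
    """Membership test on a set is a hash lookup: roughly O(n) overall."""
    seen = set()
    unique = []
    for item in items:
        if item not in seen:
            seen.add(item)
            unique.append(item)
    return unique


event_ids = ["a1", "b2", "a1", "c3", "b2"]  # hypothetical event identifiers
```

On five records the difference is invisible, but on millions of records the set-based version is the one that stays practical.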

What is the difference between data engineering and related fields like data science and data analysis?

| Aspect | Data Engineer | Data Scientist | Data Analyst |
|---|---|---|---|
| Primary Role | Lay foundation, construct data architecture | Envision and design data structures, develop models | Analyze data, provide insights, and decision support |
| Responsibilities | Build data pipelines, manage databases | Develop machine learning models, analyze complex data | Summarize data, conduct exploratory analysis |
| Focus Area | Data infrastructure, ETL processes | Statistical and machine learning techniques | Data visualization, statistical analysis |
| Tools/Languages | SQL, Python, Java, Scala, Hadoop, Spark | Python, R, statistical software | Excel, SQL, statistical software |
| Skill Requirements | Strong in database management, ETL processes | Proficient in statistics, machine learning algorithms | Analytical thinking, data visualization |
| Outcome | Robust, scalable data infrastructure | Insights for decision-making, predictive models | Understandable data presentation, decision support |

Data engineering role in the data lifecycle

Let’s look at some real-world examples of how data engineering solutions enhance decision-making and business intelligence, using three cases: a retailer, a manufacturer, and a healthcare provider.

For a retail company that wants to monitor sales performance and customer behavior, data engineers create pipelines to collect data from online transactions, point-of-sale systems, and customer databases. Together, these sources provide the dataset the retailer uses to make decisions. For a manufacturing company seeking to optimize its supply chain, data engineers would create systems to gather and process sensor data from production lines, inventory databases, and logistics systems. With this dataset in place, business intelligence tools and analysts are better equipped to locate bottlenecks, optimize inventory, and, above all, improve supply chain efficiency.

To improve patient outcomes and operational efficiency, a healthcare provider would need a data engineer to build data pipelines from electronic health records, patient management systems, and medical devices. With this dataset, the provider could learn more about patient satisfaction and experience, treatment effectiveness and outcomes, resource allocation, forecasting and preventing disease onset, opportunities to improve hospital operations, and cost drivers and revenue opportunities.

Based on these examples, a data engineer’s responsibilities throughout the data lifecycle include:

- 1. Data collection: This entails creating and running systems to gather and extract information from various sources. Examples include social media, transactional databases, sensor data from Internet of Things devices, documents, maps, texts, photos, sales data, and stock prices.

- 2. Data storage: Storing massive amounts of data in data lakes or warehouses and making sure it is organized for easy access.

- 3. Data processing: Setting up distributed processing frameworks to clean, aggregate, and transform data so that it is ready for analysis.

- 4. Data integration: Building pipelines to combine data from multiple sources to get an all-encompassing view.

- 5. Data governance and quality: Making sure that data is accurate, reliable, and compliant with legal requirements.

- 6. Data provisioning: Making sure applications and end users can access the processed data.
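The data integration step in the list above can be sketched as follows. Two hypothetical source extracts, one from a CRM and one from a point-of-sale system, are merged into a single view per customer. The record layouts and the `integrate` function are assumptions made for illustration.

```python
from typing import Dict

# Hypothetical extracts from two source systems, keyed by customer id.
crm_records = {
    101: {"name": "Ada", "email": "ada@example.com"},
    102: {"name": "Grace", "email": "grace@example.com"},
}
pos_records = {
    101: {"total_spent": 250.0},
    103: {"total_spent": 40.0},
}


def integrate(*sources: Dict[int, dict]) -> Dict[int, dict]:
    """Merge records that share a key into one combined record per customer."""
    unified: Dict[int, dict] = {}
    for source in sources:
        for customer_id, fields in source.items():
            unified.setdefault(customer_id, {}).update(fields)
    return unified


customer_view = integrate(crm_records, pos_records)
```

Customers that appear in only one system are kept with whatever fields are available, so the combined view also reveals gaps between the sources.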

What Are The Needed Prerequisites To Study Data Engineering?

First and foremost, you need a strong programming foundation. Proficiency in languages such as Scala, Java, or Python makes it possible to write scalable and efficient code for data engineering tools. It is essential to understand concepts like variables, data types, loops, conditional expressions, and functions.

- Another prerequisite is familiarity with databases and SQL. It is essential to understand how to use relational databases and write SQL queries that extract, modify, and manage data. It also helps to be familiar with non-relational databases such as Apache Cassandra and MongoDB.

- Optimizing data processing and storage requires a fundamental understanding of data structures and algorithms. It enables you to select the right data structures and algorithms for efficiently managing massive amounts of data. Knowledge of distributed systems is also useful. Scalable data engineering solutions must take into account concepts like data partitioning, fault tolerance, and parallel processing.

- More and more businesses are implementing cloud data solutions, so familiarity with cloud platforms and services such as AWS, Azure, or Google Cloud is becoming important. Experience with cloud storage, computing resources, and data processing tools is an advantage in the field of data engineering.
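Two of the distributed systems concepts mentioned above, splitting data into segments (partitions) and parallel processing, can be sketched on a single machine. The example below assigns records to partitions with a stable hash and processes each partition on its own worker thread. The function names and sample keys are hypothetical, and fault tolerance is not shown.

```python
import zlib
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List


def partition(records: List[str], num_partitions: int) -> Dict[int, List[str]]:
    """Assign each record to a partition using a stable hash of its key."""
    partitions: Dict[int, List[str]] = {i: [] for i in range(num_partitions)}
    for record in records:
        index = zlib.crc32(record.encode("utf-8")) % num_partitions
        partitions[index].append(record)
    return partitions


def process_partition(records: List[str]) -> int:
    """Stand-in for real work: count the characters in one partition."""
    return sum(len(record) for record in records)


def process_in_parallel(records: List[str], num_partitions: int = 3) -> int:
    """Process every partition on its own worker and combine the results."""
    parts = partition(records, num_partitions)
    with ThreadPoolExecutor(max_workers=num_partitions) as pool:
        return sum(pool.map(process_partition, parts.values()))


user_ids = ["user-1", "user-2", "user-3", "user-4", "user-5"]  # hypothetical keys
total_chars = process_in_parallel(user_ids)
```

A stable hash is used so that the same key always lands in the same partition. In a real distributed system the partitions would live on different machines rather than in threads of one process.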

Last but not least, you can overcome difficult data engineering problems with a strong analytical approach and problem-solving abilities. Performance optimization, data quality assurance, and large-scale data handling are common tasks in data engineering. The ability to assess issues, dissect them into digestible parts, and come up with workable solutions is essential.

Although these requirements offer a basis for understanding data engineering, it’s important to keep in mind that practical experience and further education are needed for success in this ever-changing field. It’s also necessary to stay current with emerging trends and innovations.

The Skills Needed to Work in Data Engineering

A data engineering professional needs several critical skills to succeed in the industry. The foremost is a solid programming foundation. Data engineering operations such as extract, transform, and load (ETL) procedures require proficiency in languages like Python, Java, or Scala.

Database skills are another necessity.

Understanding relational databases and SQL, as well as non-relational databases like MongoDB or Apache Cassandra, is vital for effectively managing and modifying substantial amounts of data.

Data warehousing principles and technologies

Data engineers must understand the concepts and tools of data warehousing. It is critical to understand how data warehouse architectures are designed and implemented on cloud-based platforms, using technologies like Apache Hadoop and Spark along with alternatives like Amazon Redshift or Google BigQuery.

Proficiency with ETL and data integration tools is a must for data engineers. Tools like Talend, Apache Kafka, and Apache NiFi enable the efficient movement and transformation of data between multiple sources and destinations.

Cloud Integration:

As businesses move their data infrastructure to the cloud, understanding cloud computing platforms like AWS, Azure, or Google Cloud is becoming increasingly valuable.

Schema Design and Data Modeling:

Experts in data engineering should also be well-versed in schema design and data modeling. This entails having an understanding of database normalization, data modeling methodologies, and creating effective schemas for data storage and retrieval.
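To make normalization concrete, here is a minimal sketch using SQLite. Customer details are stored once in their own table and referenced from the orders table by id. The table and column names are hypothetical, and amounts are stored as integer cents to keep the arithmetic exact.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces foreign keys only when asked

# Normalized design: customer details are stored once and referenced by id,
# instead of being repeated on every order row.
conn.executescript(
    """
    CREATE TABLE customers (
        customer_id  INTEGER PRIMARY KEY,
        name         TEXT NOT NULL,
        email        TEXT NOT NULL UNIQUE
    );
    CREATE TABLE orders (
        order_id     INTEGER PRIMARY KEY,
        customer_id  INTEGER NOT NULL REFERENCES customers(customer_id),
        amount_cents INTEGER NOT NULL
    );
    """
)
conn.execute("INSERT INTO customers VALUES (1, 'Ada', 'ada@example.com')")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)", [(10, 1, 1999), (11, 1, 500)]
)

# A join reassembles the combined view when it is needed.
report = conn.execute(
    """
    SELECT c.name, COUNT(o.order_id), SUM(o.amount_cents)
    FROM customers AS c
    JOIN orders AS o ON o.customer_id = c.customer_id
    GROUP BY c.customer_id
    """
).fetchall()
```

With this schema, changing a customer’s email means updating one row, and the foreign key prevents orders from pointing at customers that do not exist.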

Lastly, resolving problems, maximizing performance, and guaranteeing data integrity and quality all require excellent problem-solving and analytical abilities. With these skills, people can establish themselves as capable data engineers who can manage large-scale data infrastructure and process data at scale despite the many challenges involved.

Difficulties with Data Engineering

Even though the field of data engineering is expanding, there are still challenges that data engineers must overcome:

- Data Quality Problems: Managing data gets harder as data volume rises. This is where data quality problems arise, because a lot of inconsistent data is generated from a variety of sources. As a result, a data engineer’s task is to clean the data and give the data scientist only what is relevant.

- Testing of Data Pipelines: Even the smallest breakage in a pipeline can result in huge costs for the company, so pipelines need to be tested thoroughly.

- Context Switching: Running an ETL job can take a long time, and problems will often surface while the job is running. As a result, switching to the next iteration and getting back into the right mindset becomes challenging.

- Alignment: Since data engineers are the cornerstones of any data engineering or data science project, inconsistencies in the data may cause issues. It’s essential to establish alignment and consistency in a large business.
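The first two challenges above, data quality and pipeline testing, often go hand in hand. The sketch below shows a small cleaning step together with a test that checks it against known input. The cleaning rules (a valid email contains “@”, an age must be a whole number) and all names are simplified assumptions, not a complete validation scheme.

```python
from typing import Dict, List, Optional


def clean_record(record: Dict[str, str]) -> Optional[Dict[str, object]]:
    """Normalize one raw record, or return None if it cannot be trusted."""
    email = record.get("email", "").strip().lower()
    age_text = record.get("age", "").strip()
    if "@" not in email or not age_text.isdigit():
        return None
    return {"email": email, "age": int(age_text)}


def clean_records(records: List[Dict[str, str]]) -> List[Dict[str, object]]:
    """Clean every record and drop duplicates that share the same email."""
    seen = set()
    cleaned = []
    for record in records:
        result = clean_record(record)
        if result is not None and result["email"] not in seen:
            seen.add(result["email"])
            cleaned.append(result)
    return cleaned


def test_clean_records() -> None:
    """A small pipeline test: known input must produce known output."""
    raw = [
        {"email": " Ada@Example.com ", "age": "36"},
        {"email": "ada@example.com", "age": "36"},     # duplicate after cleaning
        {"email": "no-at-sign", "age": "41"},          # invalid email
        {"email": "grace@example.com", "age": "n/a"},  # invalid age
    ]
    assert clean_records(raw) == [{"email": "ada@example.com", "age": 36}]


test_clean_records()
```

Running tests like this on every change helps catch a broken transformation before it reaches production data.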

The Trends and Future of Data Engineering

Data engineering is about to undergo a radical transformation as a result of rapid advances in technology, including serverless computing, artificial intelligence, machine learning, and hybrid clouds. In the coming years, the use of big data and data engineering tools will rise. We are seeing a transition toward “real-time data pipelines and real-time data processing systems,” driven by the shift from batch-oriented data movement and processing to real-time data movement and processing.

- A data warehouse can accommodate data marts, data lakes, or basic data sets, depending on requirements.

Future developments in the role of data engineer are expected to occur in the following areas:

- Batch to real time: Database streaming is becoming a reality as change data capture solutions quickly replace batch ETL. These days, ETL operations are carried out in real time.

- Improved communication between the data warehouse and data sources.

- Self-service analytics enabled by smart tools.

- Automation of data science tasks.

- Hybrid data architectures that straddle cloud and on-premises settings.
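The first item in the list above, change data capture, can be illustrated with a simplified sketch. Instead of reloading a whole table in a nightly batch, each change is applied to the target as it arrives. The event format and the `apply_change` function are hypothetical and do not follow any particular tool’s format.

```python
from typing import Dict, List

# Hypothetical change events, in the order a source database emitted them.
change_log: List[dict] = [
    {"op": "insert", "key": 1, "row": {"name": "Ada", "plan": "free"}},
    {"op": "insert", "key": 2, "row": {"name": "Grace", "plan": "free"}},
    {"op": "update", "key": 1, "row": {"plan": "pro"}},
    {"op": "delete", "key": 2},
]


def apply_change(target: Dict[int, dict], event: dict) -> None:
    """Apply a single change event to the target table as soon as it arrives."""
    if event["op"] == "insert":
        target[event["key"]] = dict(event["row"])
    elif event["op"] == "update":
        target[event["key"]].update(event["row"])
    elif event["op"] == "delete":
        target.pop(event["key"], None)
    else:
        raise ValueError(f"unknown operation: {event['op']}")


replica: Dict[int, dict] = {}
for event in change_log:
    apply_change(replica, event)
```

After all four events, the replica holds only the first customer, now on the upgraded plan, which mirrors the current state of the source without a full reload.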

Trends in Data Engineering:

AI-Powered Progress

Artificial intelligence is being used more and more to automate repetitive activities and manual labor. In light of this, data engineers can employ AI to handle tedious activities related to quality assurance. They will be able to concentrate more on their primary skills, which include problem-solving and software creation.

In addition to automating repetitive operations, data engineers can guide AI to write code by using behavior-driven and test-driven development methodologies. This lets them concentrate on other facets of their work while still maintaining the quality of their code.

Program Development

The newest superstars in the field of software engineering are data engineers. For certain tasks, they employ many of the same tools that software programmers do. They face the same difficulties as software engineers, such as creating and managing data pipelines.

The primary distinction between software engineers and data engineers is that the latter are experts in handling data. This means they are frequently in charge of gathering and keeping track of data from multiple sources, including web protocols like HTTP/3 or blockchain technology. They collaborate with both internal and external systems to gather data that helps improve existing products and services and develop new ones.

Automation of Data Engineering

Although the field of data engineering is expanding, it still has to keep up with how quickly the world of data is changing. New technologies for agile data engineering are being developed to handle the data pipeline’s repetitive chores. These solutions streamline a lot of manual work, which frees up data scientists to concentrate on applying automation and machine learning to problem-solving.

By applying DevOps practices such as automation, continuous delivery, and agile development, data operations technologies also facilitate this process. Greater agility and fewer errors will eventually boost productivity throughout the entire company.

Conclusion

Data engineering is the process of planning, developing, and constructing data pipelines, as well as transforming data, to improve the experience of the data scientists and big data engineers who use it. To become a data engineer, one needs a particular set of skills and background, such as SQL, programming, Apache tools like Hadoop and Spark, Azure, and machine learning. Data engineers are in charge of creating data sets, data modeling, data mining, and data structures.

Before data is processed and modeled, data engineers collaborate closely with data scientists and data analysts to reshape it. Big data is commonly stored in data lakes and warehouses. Data lakes are enormous repositories of raw, unstructured data, whereas data warehouses aggregate structured, filtered, and processed data.

A few of the main difficulties in data engineering are context switching, data leakage, and problems with data quality. Even so, data engineering is reportedly in high demand worldwide. It is regarded as one of the best careers, and the pay for data engineers has been steadily increasing. With the move toward real-time data processing and analytics, its future is very dynamic. Big data and other data science technologies will be widely used in the future.

FAQ

What does a data engineer do?

The task of transforming unprocessed data into information that a company can comprehend and utilize falls to data engineers. They combine, test, and optimize data from multiple sources as part of their work.

Describe data engineering and provide an example.

Data engineering involves sourcing, transforming, and analyzing data from each system in order to increase its value and accessibility for users. For instance, information kept in a relational database is organized into tables, much like in an Excel spreadsheet.

Describe data engineering competencies.

Building data infrastructures is the responsibility of big data engineers, who also need to have practical experience with big data frameworks and databases like Hadoop and Cloudera. Additionally, they must be familiar with a variety of tools, including Excel, R, Python, Scala, HPCC, Storm, Rapidminer, SPSS, and SAS.

Are ETL and data engineering the same thing?

Not exactly. Extract, Transform, and Load (ETL) is one step in the data engineering process. Data engineers carry it out because they are specialists in preparing data for consumption.

Do data engineers write computer code?

Yes. Hiring managers frequently look for applicants with coding experience, and applicants who know the fundamentals of programming in languages like Python have an advantage over others.